Deploying a cluster on bare metal

- Single-cluster Pulsar installations should be sufficient for all but the most ambitious use cases. If you're interested in experimenting with Pulsar or using it in a startup or on a single team, we recommend opting for a single cluster. If you do need to run a multi-cluster Pulsar instance, however, see the guide here.

- If you want to use all built-in Pulsar IO connectors in your Pulsar deployment, you need to download the apache-pulsar-io-connectors package and make sure it is installed under the connectors directory in the Pulsar directory on every broker node, or on every function-worker node if you run a separate cluster of function workers for Pulsar Functions.

- If you want to use the Tiered Storage feature in your Pulsar deployment, you need to download the apache-pulsar-offloaders package and make sure it is installed under the offloaders directory in the Pulsar directory on every broker node. For details on configuring this feature, see the Tiered storage cookbook.

Deploying a Pulsar cluster involves doing the following (in order):

- Deploying a ZooKeeper cluster (optional)

- Initializing cluster metadata

- Deploying a BookKeeper cluster

- Deploying one or more Pulsar brokers

Preparation

Requirements

Currently, Pulsar is available for 64-bit macOS, Linux, and Windows. To use Pulsar, you need to install a 64-bit JRE/JDK, version 8 or later.

If you already have an existing ZooKeeper cluster and would like to reuse it, you don't need to prepare the machines for running ZooKeeper.

To run Pulsar on bare metal, we recommend that you have:

- At least 6 Linux machines or VMs

  - 3 running ZooKeeper

  - 3 running a Pulsar broker and a BookKeeper bookie

- A single DNS name covering all of the Pulsar broker hosts

However, if you don't have enough machines, or you want to try out Pulsar in cluster mode and expand the cluster later, you can deploy Pulsar on a single node, which then runs ZooKeeper, a bookie, and a broker on the same machine.

Each machine in your cluster will need to have Java 8 or higher installed.
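One way to check this requirement on each host is to parse the version string that java -version reports. The following is a minimal sketch, not part of Pulsar; the function name java_major_version is hypothetical, and the commented usage assumes that java is on the PATH.

```shell
# Extract the major Java version from a version string such as
# "1.8.0_292" (Java 8) or "11.0.2" (Java 11).
java_major_version() {
  local version="$1"
  case "$version" in
    1.*) version="${version#1.}" ;;  # legacy "1.x" scheme: drop the leading "1."
  esac
  echo "${version%%[!0-9]*}"          # keep only the leading run of digits
}

# Usage on a host (java prints its version to stderr):
#   v=$(java -version 2>&1 | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
#   [ "$(java_major_version "$v")" -ge 8 ] || echo "Java 8 or later is required" >&2
```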

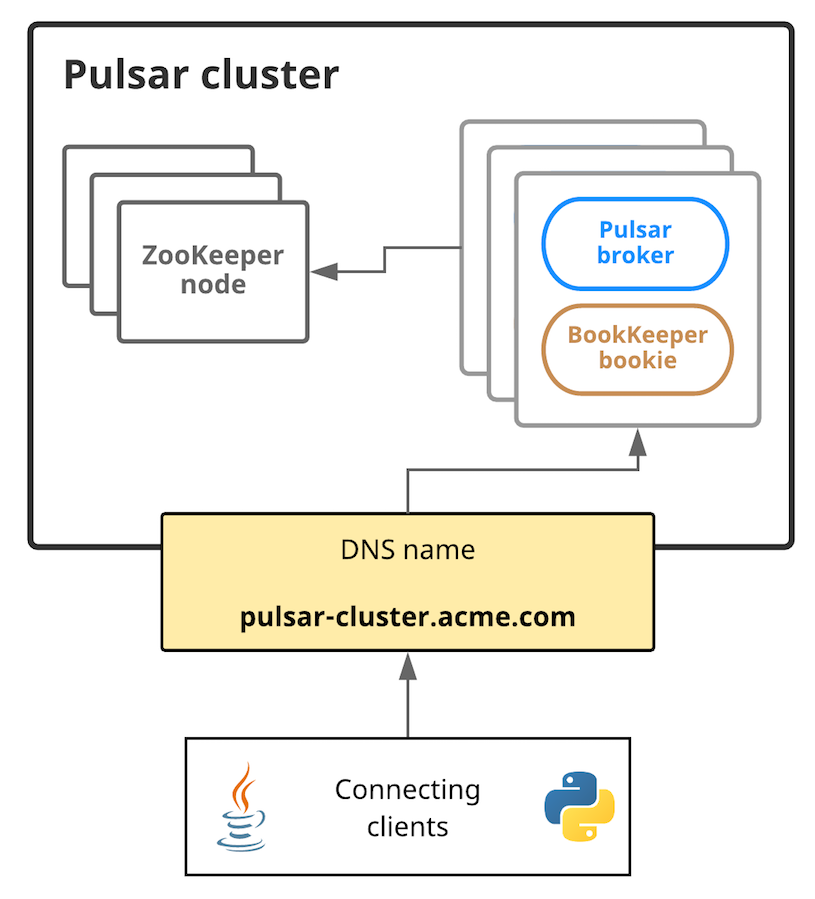

Here's a diagram showing the basic setup:

In this diagram, connecting clients need to be able to communicate with the Pulsar cluster using a single URL, in this case pulsar-cluster.acme.com, that abstracts over all of the message-handling brokers. Pulsar message brokers run on machines alongside BookKeeper bookies; brokers and bookies, in turn, rely on ZooKeeper.

Hardware considerations

When deploying a Pulsar cluster, we have some basic recommendations that you should keep in mind when capacity planning.

ZooKeeper

For machines running ZooKeeper, we recommend using lighter-weight machines or VMs. Pulsar uses ZooKeeper only for periodic coordination- and configuration-related tasks, not for basic operations. If you're running Pulsar on Amazon Web Services (AWS), for example, a t2.small instance would likely suffice.

Bookies & Brokers

For machines running a bookie and a Pulsar broker, we recommend using more powerful machines. For an AWS deployment, for example, i3.4xlarge instances may be appropriate. On those machines we also recommend:

- Fast CPUs and 10Gbps NIC (for Pulsar brokers)

- Small and fast solid-state drives (SSDs) or hard disk drives (HDDs) with a RAID controller and a battery-backed write cache (for BookKeeper bookies)

Installing the Pulsar binary package

You'll need to install the Pulsar binary package on each machine in the cluster, including machines running ZooKeeper and BookKeeper.

To get started deploying a Pulsar cluster on bare metal, you'll need to download a binary tarball release in one of the following ways:

- By clicking on the link directly below, which will automatically trigger a download:

- From the Pulsar downloads page

- From the Pulsar releases page on GitHub

- Using wget:

$ wget https://archive.apache.org/dist/pulsar/pulsar-2.3.0/apache-pulsar-2.3.0-bin.tar.gz

Once you've downloaded the tarball, untar it and cd into the resulting directory:

$ tar xvzf apache-pulsar-2.3.0-bin.tar.gz

$ cd apache-pulsar-2.3.0

The untarred directory contains the following subdirectories:

| Directory | Contains |
|---|---|
| bin | Pulsar's command-line tools, such as pulsar and pulsar-admin |
| conf | Configuration files for Pulsar, including for broker configuration, ZooKeeper configuration, and more |
| data | The data storage directory used by ZooKeeper and BookKeeper |
| lib | The JAR files used by Pulsar |
| logs | Logs created by the installation |

Installing Builtin Connectors (optional)

Since release 2.1.0-incubating, Pulsar has provided a separate binary distribution containing all the built-in connectors. If you would like to enable those built-in connectors, follow the instructions below; otherwise, you can skip this section for now.

To get started using builtin connectors, you'll need to download the connectors tarball release on every broker node in one of the following ways:

- By clicking the link below and downloading the release from an Apache mirror:

- From the Pulsar downloads page

- From the Pulsar releases page

- Using wget:

$ wget https://archive.apache.org/dist/pulsar/pulsar-2.3.0/connectors/{connector}-2.3.0.nar

Once the NAR file is downloaded, copy it to the connectors directory in the Pulsar directory. For example, if you downloaded the connector file pulsar-io-aerospike-2.3.0.nar:

$ mkdir connectors

$ mv pulsar-io-aerospike-2.3.0.nar connectors

$ ls connectors

pulsar-io-aerospike-2.3.0.nar

...

Installing Tiered Storage Offloaders (optional)

Since release 2.2.0, Pulsar has provided a separate binary distribution containing the tiered storage offloaders. If you would like to enable the tiered storage feature, follow the instructions below; otherwise, you can skip this section for now.

To get started using tiered storage offloaders, you'll need to download the offloaders tarball release on every broker node in one of the following ways:

- By clicking the link below and downloading the release from an Apache mirror:

- From the Pulsar downloads page

- From the Pulsar releases page

- Using wget:

$ wget https://archive.apache.org/dist/pulsar/pulsar-2.3.0/apache-pulsar-offloaders-2.3.0-bin.tar.gz

Once the tarball is downloaded, untar the offloaders package in the Pulsar directory and move its offloaders directory to offloaders in the Pulsar directory:

$ tar xvfz apache-pulsar-offloaders-2.3.0-bin.tar.gz

# you will find a directory named `apache-pulsar-offloaders-2.3.0` in the pulsar directory

# then move the offloaders into place

$ mv apache-pulsar-offloaders-2.3.0/offloaders offloaders

$ ls offloaders

tiered-storage-jcloud-2.3.0.nar

For details on configuring the tiered storage feature, see the Tiered storage cookbook.

Deploying a ZooKeeper cluster

If you already have an existing ZooKeeper cluster and would like to use it, you can skip this section.

ZooKeeper manages a variety of essential coordination- and configuration-related tasks for Pulsar. To deploy a Pulsar cluster you'll need to deploy ZooKeeper first (before all other components). We recommend deploying a 3-node ZooKeeper cluster. Pulsar does not make heavy use of ZooKeeper, so more lightweight machines or VMs should suffice for running ZooKeeper.

To begin, add all ZooKeeper servers to the configuration specified in conf/zookeeper.conf (in the Pulsar directory you created above). Here's an example:

server.1=zk1.us-west.example.com:2888:3888

server.2=zk2.us-west.example.com:2888:3888

server.3=zk3.us-west.example.com:2888:3888

If you have only one machine to deploy Pulsar, you just need to add one server entry in the configuration file.
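If you manage the host list in a script, the server entries can be generated rather than typed by hand. This is a minimal sketch that assumes the peer and leader-election ports 2888 and 3888 from the example above; the function name zk_server_entries is hypothetical.

```shell
# Print one "server.N=host:2888:3888" line per host, numbering from 1.
zk_server_entries() {
  local id=1 host
  for host in "$@"; do
    echo "server.${id}=${host}:2888:3888"
    id=$((id + 1))
  done
}

zk_server_entries zk1.us-west.example.com zk2.us-west.example.com zk3.us-west.example.com
```

Redirecting the output with >> conf/zookeeper.conf would append the entries to the configuration file. Passing a single host produces the one-entry configuration for a single-machine deployment.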

On each host, you need to specify the ID of the node in each node's myid file, which is in each server's data/zookeeper folder by default (this can be changed via the dataDir parameter).

See the Multi-server setup guide in the ZooKeeper documentation for detailed info on myid and more.

On a ZooKeeper server at zk1.us-west.example.com, for example, you could set the myid value like this:

$ mkdir -p data/zookeeper

$ echo 1 > data/zookeeper/myid

On zk2.us-west.example.com the command would be echo 2 > data/zookeeper/myid and so on.
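To avoid mismatches between the server entries and the myid files, the ID can be looked up from the configuration instead of being typed on each host. The sketch below assumes that the host name you pass matches a server.N entry exactly; the function name write_myid is hypothetical.

```shell
# write_myid CONF HOST DATA_DIR
# Look up HOST in the server.N entries of CONF and write N to DATA_DIR/myid.
write_myid() {
  local conf="$1" host="$2" data_dir="$3" id
  id=$(awk -F'[=:]' -v h="$host" \
    '$1 ~ /^server\.[0-9]+$/ && $2 == h { sub(/^server\./, "", $1); print $1; exit }' "$conf")
  if [ -z "$id" ]; then
    echo "no server entry for ${host} in ${conf}" >&2
    return 1
  fi
  mkdir -p "$data_dir" && echo "$id" > "${data_dir}/myid"
}

# Usage with the default paths (assumes the host's FQDN is what the entries use):
#   write_myid conf/zookeeper.conf "$(hostname -f)" data/zookeeper
```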

Once each server has been added to the zookeeper.conf configuration and has the appropriate myid entry, you can start ZooKeeper on all hosts (in the background, using nohup) with the pulsar-daemon CLI tool:

$ bin/pulsar-daemon start zookeeper

If you plan to deploy ZooKeeper and a bookie on the same node, you need to start ZooKeeper with a different stats port. Start ZooKeeper with the pulsar-daemon CLI tool like this:

$ PULSAR_EXTRA_OPTS="-Dstats_server_port=8001" bin/pulsar-daemon start zookeeper

Initializing cluster metadata

Once you've deployed ZooKeeper for your cluster, there is some metadata that needs to be written to ZooKeeper for each cluster in your instance. It only needs to be written once.

You can initialize this metadata using the initialize-cluster-metadata command of the pulsar CLI tool. This command can be run on any machine in your ZooKeeper cluster. Here's an example:

$ bin/pulsar initialize-cluster-metadata \
  --cluster pulsar-cluster-1 \
  --zookeeper zk1.us-west.example.com:2181 \
  --configuration-store zk1.us-west.example.com:2181 \
  --web-service-url http://pulsar.us-west.example.com:8080 \
  --web-service-url-tls https://pulsar.us-west.example.com:8443 \
  --broker-service-url pulsar://pulsar.us-west.example.com:6650 \
  --broker-service-url-tls pulsar+ssl://pulsar.us-west.example.com:6651

As you can see from the example above, the following needs to be specified:

| Flag | Description |
|---|---|
| --cluster | A name for the cluster |
| --zookeeper | A "local" ZooKeeper connection string for the cluster. This connection string only needs to include one machine in the ZooKeeper cluster. |
| --configuration-store | The configuration store connection string for the entire instance. As with the --zookeeper flag, this connection string only needs to include one machine in the ZooKeeper cluster. |
| --web-service-url | The web service URL for the cluster, plus a port. This URL should be a standard DNS name. The default port is 8080 (we don't recommend using a different port). |
| --web-service-url-tls | If you're using TLS, you'll also need to specify a TLS web service URL for the cluster. The default port is 8443 (we don't recommend using a different port). |
| --broker-service-url | A broker service URL enabling interaction with the brokers in the cluster. This URL should use the same DNS name as the web service URL but should use the pulsar scheme instead. The default port is 6650 (we don't recommend using a different port). |
| --broker-service-url-tls | If you're using TLS, you'll also need to specify a TLS web service URL for the cluster as well as a TLS broker service URL for the brokers in the cluster. The default port is 6651 (we don't recommend using a different port). |
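Because all four service URLs share one DNS name and differ only in scheme and default port, they can be derived from that name. The following sketch prints the corresponding flags; the function name cluster_url_flags is hypothetical, and the ports are the defaults listed in the table.

```shell
# Print the four service-URL flags for a cluster DNS name, using the default ports.
cluster_url_flags() {
  local dns="$1"
  printf '%s\n' "--web-service-url http://${dns}:8080"
  printf '%s\n' "--web-service-url-tls https://${dns}:8443"
  printf '%s\n' "--broker-service-url pulsar://${dns}:6650"
  printf '%s\n' "--broker-service-url-tls pulsar+ssl://${dns}:6651"
}

cluster_url_flags pulsar.us-west.example.com
```

The output is meant to be reviewed and pasted into the initialize-cluster-metadata command. If you are not using TLS, omit the two TLS flags.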

Deploying a BookKeeper cluster

BookKeeper handles all persistent data storage in Pulsar. You will need to deploy a cluster of BookKeeper bookies to use Pulsar. We recommend running a 3-bookie BookKeeper cluster.

BookKeeper bookies can be configured using the conf/bookkeeper.conf configuration file. The most important step in configuring bookies for our purposes here is ensuring that the zkServers parameter is set to the connection string for the ZooKeeper cluster. Here's an example:

zkServers=zk1.us-west.example.com:2181,zk2.us-west.example.com:2181,zk3.us-west.example.com:2181
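The connection string is a comma-separated list of host:port pairs, so it can be built from the same host list used for the ZooKeeper configuration. This is a minimal sketch that assumes the client port 2181 shown above; the function name zk_connection_string is hypothetical.

```shell
# Join hosts into a ZooKeeper connection string, appending client port 2181 to each.
zk_connection_string() {
  local out="" host
  for host in "$@"; do
    out="${out:+${out},}${host}:2181"  # add a comma only between entries
  done
  echo "$out"
}

echo "zkServers=$(zk_connection_string zk1.us-west.example.com zk2.us-west.example.com zk3.us-west.example.com)"
```

The same string can be reused for the zookeeperServers and configurationStoreServers broker parameters in a single-cluster setup.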

Once you've appropriately modified the zkServers parameter, you can provide any other configuration modifications you need. You can find a full listing of the available BookKeeper configuration parameters here, although we would recommend consulting the BookKeeper documentation for a more in-depth guide.

Once you've applied the desired configuration in conf/bookkeeper.conf, you can start up a bookie on each of your BookKeeper hosts. You can start up each bookie either in the background, using nohup, or in the foreground.

To start the bookie in the background, use the pulsar-daemon CLI tool:

$ bin/pulsar-daemon start bookie

To start the bookie in the foreground:

$ bin/bookkeeper bookie

You can verify that a bookie is working properly by running the bookiesanity command for the BookKeeper shell on it:

$ bin/bookkeeper shell bookiesanity

This will create an ephemeral BookKeeper ledger on the local bookie, write a few entries, read them back, and finally delete the ledger.

After you have started all the bookies, you can use the simpletest command of the BookKeeper shell on any bookie node to verify that all the bookies in the cluster are up and running.

$ bin/bookkeeper shell simpletest --ensemble <num-bookies> --writeQuorum <num-bookies> --ackQuorum <num-bookies> --numEntries <num-entries>

This command will create a num-bookies sized ledger on the cluster, write a few entries, and finally delete the ledger.

Deploying Pulsar brokers

Pulsar brokers are the last thing you need to deploy in your Pulsar cluster. Brokers handle Pulsar messages and provide Pulsar's administrative interface. We recommend running 3 brokers, one for each machine that's already running a BookKeeper bookie.

Configuring Brokers

The most important element of broker configuration is ensuring that each broker is aware of the ZooKeeper cluster that you've deployed. Make sure that the zookeeperServers and configurationStoreServers parameters are set correctly. In this case, since we have only one cluster and no separate configuration store, configurationStoreServers points to the same servers as zookeeperServers.

zookeeperServers=zk1.us-west.example.com:2181,zk2.us-west.example.com:2181,zk3.us-west.example.com:2181

configurationStoreServers=zk1.us-west.example.com:2181,zk2.us-west.example.com:2181,zk3.us-west.example.com:2181

You also need to specify the cluster name (matching the name that you provided when initializing the cluster's metadata):

clusterName=pulsar-cluster-1

If you deploy Pulsar in a one-node cluster, you should update the replication settings in conf/broker.conf to 1:

# Number of bookies to use when creating a ledger

managedLedgerDefaultEnsembleSize=1

# Number of copies to store for each message

managedLedgerDefaultWriteQuorum=1

# Number of guaranteed copies (acks to wait before write is complete)

managedLedgerDefaultAckQuorum=1
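These three values depend on each other: as we understand BookKeeper's quorum model, the ensemble size must be at least the write quorum, the write quorum must be at least the ack quorum, and the ensemble cannot exceed the number of available bookies. That is why a single bookie forces all three to 1. The check below is a sketch of that rule only; valid_quorum is a hypothetical helper, not a Pulsar or BookKeeper command.

```shell
# valid_quorum BOOKIES ENSEMBLE WRITE_QUORUM ACK_QUORUM
# Succeeds only if bookies >= ensemble >= write quorum >= ack quorum >= 1.
valid_quorum() {
  local bookies="$1" ensemble="$2" write_q="$3" ack_q="$4"
  [ "$ack_q" -ge 1 ] &&
    [ "$write_q" -ge "$ack_q" ] &&
    [ "$ensemble" -ge "$write_q" ] &&
    [ "$bookies" -ge "$ensemble" ]
}

# One bookie with the one-node settings shown above.
if valid_quorum 1 1 1 1; then
  echo "one-node settings are consistent"
fi
```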

Enabling Pulsar Functions (optional)

If you want to enable Pulsar Functions, follow these steps:

- Edit conf/broker.conf to enable the function worker by setting functionsWorkerEnabled to true.

functionsWorkerEnabled=true

- Edit conf/functions_worker.yml and set pulsarFunctionsCluster to the cluster name that you provided when initializing the cluster's metadata.

pulsarFunctionsCluster: pulsar-cluster-1

Starting Brokers

You can then provide any other configuration changes that you'd like in the conf/broker.conf file. Once you've decided on a configuration, you can start up the brokers for your Pulsar cluster. Like ZooKeeper and BookKeeper, brokers can be started either in the foreground or in the background, using nohup.

You can start a broker in the foreground using the pulsar broker command:

$ bin/pulsar broker

You can start a broker in the background using the pulsar-daemon CLI tool:

$ bin/pulsar-daemon start broker

Once you've successfully started up all the brokers you intend to use, your Pulsar cluster should be ready to go!

Connecting to the running cluster

Once your Pulsar cluster is up and running, you should be able to connect with it using Pulsar clients. One such client is the pulsar-client tool, which is included with the Pulsar binary package. The pulsar-client tool can publish messages to and consume messages from Pulsar topics and thus provides a simple way to make sure that your cluster is running properly.

To use the pulsar-client tool, first modify the client configuration file in conf/client.conf in your binary package. You'll need to change the values for webServiceUrl and brokerServiceUrl, substituting localhost (which is the default), with the DNS name that you've assigned to your broker/bookie hosts. Here's an example:

webServiceUrl=http://us-west.example.com:8080/

brokerServiceUrl=pulsar://us-west.example.com:6650/
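If you need to apply this change on several client machines, the substitution can be scripted. The sketch below assumes that both keys already exist in the file with the localhost defaults and that the default ports are in use; the function name set_client_urls is hypothetical.

```shell
# set_client_urls CONF HOST
# Point webServiceUrl and brokerServiceUrl in CONF at HOST, using the default ports.
set_client_urls() {
  local conf="$1" host="$2" tmp status
  tmp=$(mktemp) || return 1
  # Rewrite into a temporary file first, then copy back so that the
  # original file's permissions are preserved.
  sed -e "s|^webServiceUrl=.*|webServiceUrl=http://${host}:8080/|" \
      -e "s|^brokerServiceUrl=.*|brokerServiceUrl=pulsar://${host}:6650/|" \
      "$conf" > "$tmp" \
    && cat "$tmp" > "$conf"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Usage:
#   set_client_urls conf/client.conf us-west.example.com
```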

Once you've done that, you can publish a message to a Pulsar topic:

$ bin/pulsar-client produce \
  persistent://public/default/test \
  -n 1 \
  -m "Hello Pulsar"

You may need to use a different cluster name in the topic if you specified a cluster name different from pulsar-cluster-1.

This will publish a single message to the Pulsar topic. In addition, you can subscribe to the Pulsar topic in a different terminal before publishing messages, as shown here:

$ bin/pulsar-client consume \
  persistent://public/default/test \
  -n 100 \
  -s "consumer-test" \
  -t "Exclusive"

Once the message above has been successfully published to the topic, you should see it in the standard output:

----- got message -----

Hello Pulsar

Running Functions

If you have enabled Pulsar Functions, you can also try out Pulsar Functions now.

Create an ExclamationFunction named exclamation.

$ bin/pulsar-admin functions create \
  --jar examples/api-examples.jar \
  --classname org.apache.pulsar.functions.api.examples.ExclamationFunction \
  --inputs persistent://public/default/exclamation-input \
  --output persistent://public/default/exclamation-output \
  --tenant public \
  --namespace default \
  --name exclamation

Check if the function is running as expected by triggering the function.

$ bin/pulsar-admin functions trigger --name exclamation --trigger-value "hello world"

You will see output as below:

hello world!